LDA is a supervised algorithm (unlike PCA), hence we need to have the target classes specified. First we will create an LDA class, implement the logic there, and then run different experiments. The programming language is C++, with the Eigen template library for linear algebra (for example, `Eigen::Vector2d` for 2D vectors); Eigen is also available in R through its Rcpp integration.

For the class statistics we use \(C\) for the number of classes, \(n_i\) for the number of data points in class \(i\), \(\mu_i\) for the mean of the data points in class \(i\), and \(\mu\) for the mean of the whole dataset. With this notation, the between-class scatter matrix is

\[ S_B = \sum_{i=1}^{C} n_i (\mu_i - \mu)(\mu_i - \mu)^T \]

Orthogonal Projection:

As per the objective, we need to project the input data onto the vector \(w\). Assume that we already know \(w\) (I will show how to find the optimal \(w\) later). Now let's derive the equation for orthogonally projecting a vector \(x\) (the input vector) onto \(w\). The vector \(p\) is called the orthogonal projection of \(x\) onto \(w\), and the vector \(d = x - p\) is the perpendicular from \(x\) to the line spanned by \(w\); this is also known as the residual or error vector. Since \(p\) lies along \(w\), we can write \(p = c\,w\) for some scalar \(c\). In this case \(p\) and \(d\) are orthogonal to each other; since \(p\) points along \(w\), this means \(d\) is orthogonal to \(w\). We know that if two vectors are orthogonal, the dot product between them is 0, so

\[ w^T d = w^T (x - c\,w) = 0 \quad \Rightarrow \quad c = \frac{w^T x}{w^T w} \]

We can now rewrite the expression of \(p\) as

\[ p = \left( \frac{w^T x}{w^T w} \right) w \]

The same 2D picture is useful for eigenvectors and eigenvalues: suppose we have a square in 2D space where every point on the square is a vector, of which I will use only three. A related exercise is finding the eigenvalues and eigenvectors of a 2×2 square matrix with a C++ program.

Credit: the picture below has been taken from "Pattern Recognition and Machine Learning" by Christopher Bishop.
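The projection formula \(p = \left( \frac{w^T x}{w^T w} \right) w\) can be sketched in a few lines of C++. To keep the example self-contained it uses plain `std::vector<double>` rather than Eigen, and the helper names `dot`, `project` and `residual` are hypothetical: they are not part of the LDA class described in this post.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Dot product of two vectors of equal length.
double dot(const std::vector<double>& a, const std::vector<double>& b) {
    assert(a.size() == b.size());
    double s = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) s += a[i] * b[i];
    return s;
}

// Orthogonal projection of x onto w: p = (w^T x / w^T w) w.
// w must be non-zero; only its direction matters, not its length.
std::vector<double> project(const std::vector<double>& x, const std::vector<double>& w) {
    const double ww = dot(w, w);
    assert(ww > 0.0);
    const double c = dot(w, x) / ww;  // the scalar c from the derivation
    std::vector<double> p(w.size());
    for (std::size_t i = 0; i < w.size(); ++i) p[i] = c * w[i];
    return p;
}

// Residual (error) vector d = x - p, which is orthogonal to w.
std::vector<double> residual(const std::vector<double>& x, const std::vector<double>& w) {
    const std::vector<double> p = project(x, w);
    std::vector<double> d(x.size());
    for (std::size_t i = 0; i < x.size(); ++i) d[i] = x[i] - p[i];
    return d;
}
```

For example, projecting \(x = (3, 4)\) onto \(w = (2, 0)\) gives \(p = (3, 0)\), and for \(x = (1, 2)\) and \(w = (1, 1)\) the residual is \(d = (-0.5, 0.5)\), whose dot product with \(w\) is 0, as the derivation requires. With Eigen vectors the same projection can be written as `(w.dot(x) / w.dot(w)) * w`.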

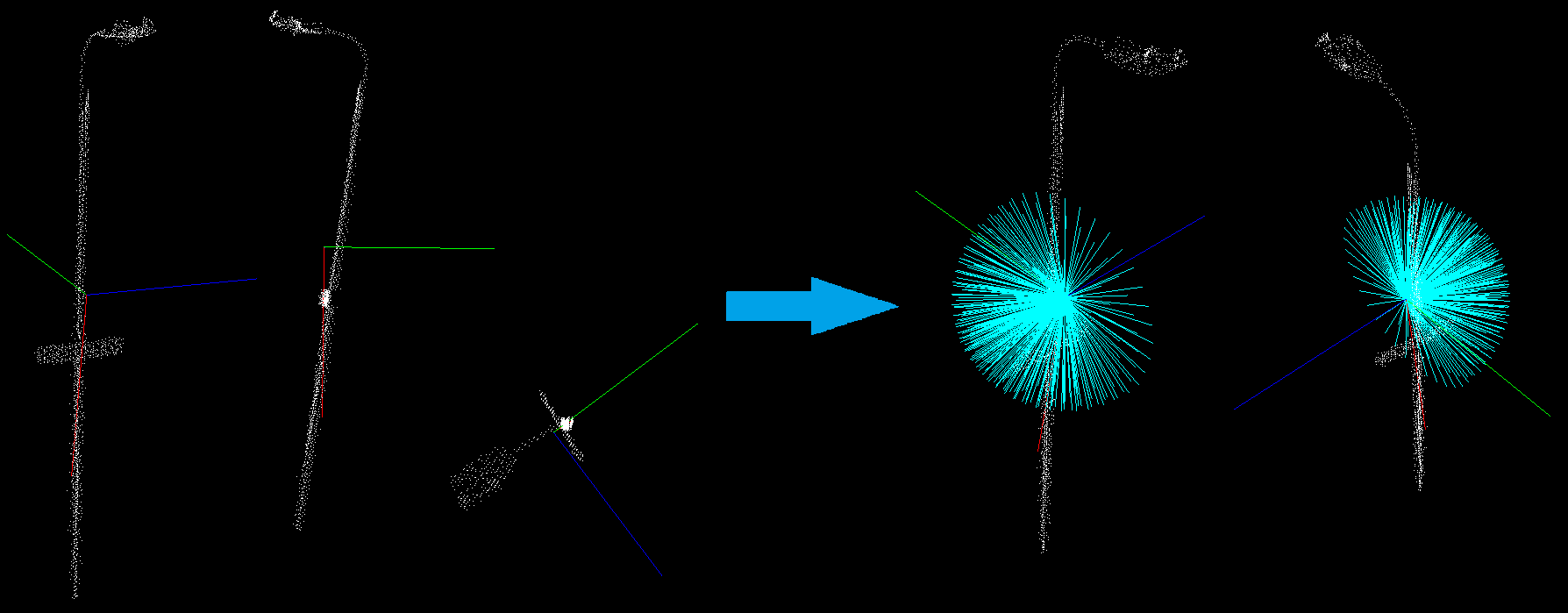

Refer to the diagram above for a better idea: the first plot shows a non-optimal projection of the data points, and the second plot shows an optimal projection, under which the classes are well separated. The basic idea is to find a vector \(w\) that maximizes the separation between the target classes after projecting them onto \(w\). Linear Discriminant Analysis can be used for both classification and dimensionality reduction.

On the linear-algebra side, Eigen provides matrices and vectors, which are both represented by the template class `Matrix`, and general 1D and 2D arrays, represented by the template class `Array`. For triangular matrices the eigenvalues are immediately found (they are the diagonal entries), and finding eigenvectors then becomes much easier. Beware, however, that row-reducing a matrix to row-echelon form and obtaining a triangular matrix does not give you its eigenvalues, as row reduction changes the eigenvalues of the matrix in general.
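The eigenvalue remarks can be checked on a 2×2 matrix \(A\), whose eigenvalues are the roots of the characteristic polynomial \(\lambda^2 - \operatorname{tr}(A)\,\lambda + \det(A) = 0\). The sketch below is plain C++ without Eigen; the function names `eigenvalues2x2` and `eigenvector2x2` are hypothetical, and it only handles real eigenvalues.

```cpp
#include <cassert>
#include <cmath>
#include <stdexcept>
#include <utility>

// Real eigenvalues of the 2x2 matrix A = [[a, b], [c, d]], found as the roots of
// the characteristic polynomial  lambda^2 - (a + d) * lambda + (a*d - b*c) = 0.
// Returns {larger, smaller}; throws std::domain_error if the roots are complex.
std::pair<double, double> eigenvalues2x2(double a, double b, double c, double d) {
    const double trace = a + d;
    const double det = a * d - b * c;
    const double disc = trace * trace - 4.0 * det;  // discriminant of the quadratic
    if (disc < 0.0) throw std::domain_error("complex eigenvalues");
    const double root = std::sqrt(disc);
    return {(trace + root) / 2.0, (trace - root) / 2.0};
}

// A non-zero eigenvector v for eigenvalue lambda, i.e. a solution of (A - lambda*I) v = 0.
// Because det(A - lambda*I) = 0, the rows of (A - lambda*I) are parallel, so any
// vector orthogonal to a non-zero row solves the whole system.
std::pair<double, double> eigenvector2x2(double a, double b, double c, double d,
                                         double lambda) {
    // Sanity check that lambda really is an eigenvalue (tolerance scaled to the inputs).
    const double scale = 1.0 + std::fabs(a) + std::fabs(b) + std::fabs(c) +
                         std::fabs(d) + std::fabs(lambda);
    assert(std::fabs((a - lambda) * (d - lambda) - b * c) <= 1e-9 * scale * scale);
    if (b != 0.0 || a - lambda != 0.0) return {b, lambda - a};  // orthogonal to row 1
    if (c != 0.0 || d - lambda != 0.0) return {lambda - d, c};  // orthogonal to row 2
    return {1.0, 0.0};  // A - lambda*I is the zero matrix: every vector qualifies
}
```

For \(A = \begin{pmatrix} 2 & 1 \\ 1 & 2 \end{pmatrix}\), `eigenvalues2x2(2, 1, 1, 2)` returns 3 and 1, with eigenvector \((1, 1)\) for 3. Row-reducing \(A\) (subtracting half of row 1 from row 2) gives the triangular matrix \(\begin{pmatrix} 2 & 1 \\ 0 & 1.5 \end{pmatrix}\), whose eigenvalues are its diagonal entries 2 and 1.5, not 3 and 1. For real work, Eigen's `Eigen::EigenSolver` (or `Eigen::SelfAdjointEigenSolver` for symmetric matrices such as scatter matrices) is the more robust choice.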
